# Assuming the virtual environment from step one is set up and activated in your terminal, run one of the following commands to install the classifai package

## PIP

#!pip install "https://github.com/datasciencecampus/classifai/releases/download/v0.2.1/classifai-0.2.1-py3-none-any.whl"

## UV

#!uv pip install "https://github.com/datasciencecampus/classifai/releases/download/v0.2.1/classifai-0.2.1-py3-none-any.whl"

Creating Your Own Vectoriser

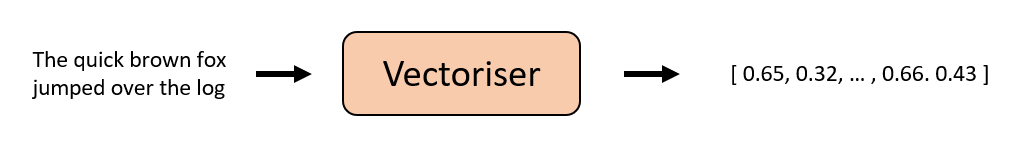

ClassifAI is a tool to help in the creation and serving of searchable vector databases, for text classification tasks.

It has three core components:

- Vectorisers - Models for converting text to vectors

- Indexers - Classes for building VectorStores from text datasets, which you can search

- Servers - Allow you to deploy VectorStores with a REST API interface

This notebook shows how to create your own custom Vectoriser. We already provide several out-of-the-box Vectoriser classes for converting text to embeddings, which act as shortcuts for several common methods, e.g. gcloud embedding services, Hugging Face models, and Ollama.

But what if you wanted to make your own custom Vectoriser that uses your own fancy embedding method?

In this Notebook…

We will show:

- The core workings of the Vectoriser class and its responsibilities

- How to create a custom One-Hot Encoding Vectoriser that will integrate seamlessly with the rest of ClassifAI

- The custom One-Hot Encoding Vectoriser being used with the Indexer module to create and search a VectorStore

Installation (pre-release)

ClassifAI is currently in pre-release and is not yet published on PyPI.

This section describes how to install the packaged wheel from the project’s public GitHub Releases so that you can follow through this DEMO and try the code yourself.

1) Create and activate a virtual environment in command line

Using pip + venv

Create a virtual environment:

python -m venv .venv

Using UV

Create a virtual environment:

uv venv

Activate the created environment with

(macOS / Linux):

source .venv/bin/activate

Activate it (Windows, from a Git Bash shell; in Command Prompt or PowerShell, run .venv\Scripts\activate instead):

source .venv/Scripts/activate

2) Install the pre-release wheel

Using pip

pip install "https://github.com/datasciencecampus/classifai/releases/download/v0.2.1/classifai-0.2.1-py3-none-any.whl"

Using uv

uv pip install "https://github.com/datasciencecampus/classifai/releases/download/v0.2.1/classifai-0.2.1-py3-none-any.whl"

Note!

You may need to install the ipykernel Python package to run notebook cells with your Python environment.

#!pip install ipykernel

#!uv pip install ipykernel

If you can run the following cell in this notebook, you should be good to go!

from classifai.vectorisers import VectoriserBase

print("done!")

Demo Data

This demo uses a mock dataset that is freely available on the ClassifAI repo. If you have not downloaded the entire DEMO folder to run this notebook, the minimum data you require is the DEMO/data/testdata.csv file, which you should place in a DEMO folder in your working directory (or you can just change the file path later in this demo notebook).

The Vectoriser

As seen above, a Vectoriser’s sole responsibility is to convert text to a vector representation. Each Vectoriser class must implement a transform() method that will:

- accept a string or list of N strings as an argument

- return a numpy array of dimension [N, Y], where N matches the number of input strings and Y is the embedding dimension

By enforcing this, the Indexers and Servers modules can reliably work with any Vectoriser object to perform the various search/classification functions required by ClassifAI.

All a developer has to consider when building their own Vectoriser is the logic of this transform() method.
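The contract described above can be sketched as a small check. This is only an illustration: `ConstantVectoriser` and `check_transform_contract` are hypothetical names made up for this example and are not part of ClassifAI, and the stand-in class deliberately does not inherit from VectoriserBase so that the snippet runs with numpy alone.

```python
import numpy as np


class ConstantVectoriser:
    """Hypothetical stand-in: maps every input text to the same 3-dimensional vector."""

    def transform(self, texts):
        # Accept a single string by wrapping it in a list
        if isinstance(texts, str):
            texts = [texts]
        return np.ones((len(texts), 3))


def check_transform_contract(vectoriser, texts):
    """Call transform() and check that the result is an [N, Y] numpy array."""
    n_expected = 1 if isinstance(texts, str) else len(texts)
    output = vectoriser.transform(texts)
    if not isinstance(output, np.ndarray):
        raise TypeError("transform() must return a numpy array")
    if output.ndim != 2 or output.shape[0] != n_expected:
        raise ValueError(f"expected shape ({n_expected}, Y), got {output.shape}")
    return output


single = check_transform_contract(ConstantVectoriser(), "one string")
batch = check_transform_contract(ConstantVectoriser(), ["a", "b", "c", "d"])
print(single.shape, batch.shape)
```

A single string still produces a two-dimensional array with one row, which is what lets downstream code treat single queries and batches the same way.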

Let’s build a One-Hot Encoding Vectoriser

One-hot encoding is a simple method of converting text to vector format. Each element of a one-hot encoded vector \(v\) represents the presence or absence of a particular word from a fixed vocabulary, so the length of the vector equals the size of the vocabulary. For each word in a sentence, the corresponding element of the vector is set to 1. So all sentences that contain the word ‘dog’ have the same element, \(v_i\), of their vectors set to 1: the element that represents the presence of the word ‘dog’. Finally, if the converted sentence contains only 5 words, then the vector will have at most 5 non-zero elements.
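To make the idea concrete, here is a minimal pure-Python sketch of the encoding described above. The function `one_hot_encode` and the five-word toy vocabulary are assumptions made for illustration; they are not part of ClassifAI, and the simple lowercase-and-split tokenisation is not the one scikit-learn uses.

```python
def one_hot_encode(sentence, vocabulary):
    """Return a list with one 0/1 entry per vocabulary word."""
    # Map each vocabulary word to its position in the vector
    index = {word: i for i, word in enumerate(vocabulary)}
    vector = [0] * len(vocabulary)
    for word in sentence.lower().split():
        # Words outside the vocabulary are silently ignored
        if word in index:
            vector[index[word]] = 1
    return vector


toy_vocabulary = ["cat", "dog", "park", "the", "walked"]

v_a = one_hot_encode("The dog walked", toy_vocabulary)
v_b = one_hot_encode("The dog chased the cat", toy_vocabulary)

print(v_a)  # [0, 1, 0, 1, 1]
print(v_b)  # [1, 1, 0, 1, 0]
```

Both sentences contain ‘dog’, so both vectors have element 1 set. The word ‘chased’ is not in the toy vocabulary and leaves no trace, and the repeated ‘the’ still only sets its element once.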

# We're going to use scikit-learn's CountVectorizer to create our one-hot embeddings - install it in the terminal or uncomment the code below

# !pip install scikit-learn

# One-hot encoding vectoriser implementation.

# 1. The class must inherit from the VectoriserBase class.

# 2. The class must implement the transform method.

# Importing scikit-learn's CountVectorizer, a common library tool for vectorisation.

import numpy as np

from sklearn.feature_extraction.text import CountVectorizer

class OneHotVectoriser(VectoriserBase):

    def __init__(self, vocabulary: list[str]):

        if not vocabulary:

            raise ValueError("Vocabulary cannot be empty.")

        self.vectorizer = CountVectorizer(binary=True, vocabulary=vocabulary)

    def transform(self, texts: str | list[str]) -> np.ndarray:

        # checking if the input is a string and converting to a list if so

        if isinstance(texts, str):

            texts = [texts]

        # we add some light type checking to make sure the input is correct

        if not isinstance(texts, list):

            raise TypeError("Input must be a string or a list of strings.")

        # Generate one-hot encodings using the CountVectorizer from scikit-learn

        one_hot_matrix = self.vectorizer.transform(texts).toarray()

        return one_hot_matrix

We’ve written our custom vectoriser to accept a vocabulary as an argument during instantiation.

You could hardcode a specific vocabulary, but passing it in as an argument makes our class reusable, so that we can instantiate different OneHotVectorisers with different vocabularies.
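As a rough illustration of why the vocabulary is worth passing in, the sketch below builds two scikit-learn CountVectorizer objects directly, configured the same way as inside OneHotVectoriser (binary=True plus a fixed vocabulary). The two tiny vocabularies and the example sentences are made up for this example.

```python
from sklearn.feature_extraction.text import CountVectorizer

# Two hypothetical vocabularies of different sizes
animal_vocab = ["cat", "dog", "fox"]
colour_vocab = ["brown", "red"]

animal_vectoriser = CountVectorizer(binary=True, vocabulary=animal_vocab)
colour_vectoriser = CountVectorizer(binary=True, vocabulary=colour_vocab)

texts = ["The dog chased the dog", "A brown fox and a cat"]

# With a fixed vocabulary, transform() can be called without fitting first
animal_matrix = animal_vectoriser.transform(texts).toarray()
colour_matrix = colour_vectoriser.transform(texts).toarray()

print(animal_matrix.shape)  # (2, 3)
print(colour_matrix.shape)  # (2, 2)
print(animal_matrix)
print(colour_matrix)
```

The embedding dimension follows the vocabulary length, and because binary=True is set, the repeated ‘dog’ in the first sentence still yields a 1 rather than a count of 2.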

We’re going to download and use Google’s 10,000 most common words - a very well known file - as our vocab for our first custom one hot encoder model.

import os

import requests

### First we need to download the vocabulary

# Check if the file already exists locally

if not os.path.exists("google-10000-english.txt"):

    # URL of the vocabulary file

    url = "https://raw.github.com/first20hours/google-10000-english/master/google-10000-english.txt"

    # Path to save the file locally

    output_file = "google-10000-english.txt"

    # Download the file

    response = requests.get(url, timeout=10)

    if response.status_code == requests.codes.ok:

        with open(output_file, "w") as file:

            file.write(response.text)

        print(f"File downloaded and saved as {output_file}")

    else:

        print(f"Failed to download file. HTTP Status Code: {response.status_code}")

# Load vocabulary from the downloaded file

with open("google-10000-english.txt") as file:

    # skip any blank lines so that no empty entries end up in the vocabulary

    vocabulary = [line.strip() for line in file if line.strip()]

print(f"Vocabulary loaded with {len(vocabulary)} words.")

# Now we can create an instance of the OneHotVectoriser with the loaded vocabulary

first_onehot_vectoriser = OneHotVectoriser(vocabulary=vocabulary)

That’s it! Let’s try it out by passing some text to the transform method!

Let’s verify a few things: that the method accepts both a single text and a list of texts, and that it returns numpy arrays of the right shape!

vector_one = first_onehot_vectoriser.transform("The quick brown fox jumped over the log")

print(f"One-hot vector shape: {vector_one.shape}")

print(f"One-hot vector type: {type(vector_one)}")

print("-----")

print(vector_one)

# we can also see how many elements of the vector are non-zero (this equals the number of unique words in the input text that appear in the vocabulary)

print(f"Number of non-zero elements in the vector: {np.count_nonzero(vector_one)}")

# We can also pass a list of strings to the transform method

vector_two = first_onehot_vectoriser.transform(

    ["The quick brown fox jumped over the log", "Slow and steady wins the race"]

)

print(f"One-hot vector shape: {vector_two.shape}")

print(f"One-hot vector type: {type(vector_two)}")

print("-----")

print(vector_two)

Let’s use the One-Hot Encoding Vectoriser to create a VectorStore!

If you followed along with the code above, you should now have a custom vectoriser in memory, and it should be fully compatible with the rest of the package.

We are now going to borrow the second section of the oo_prototype_demo.ipynb notebook and build a VectorStore with the ‘data/testdata.csv’ file.

from classifai.indexers import VectorStore

my_vector_store = VectorStore(

    file_name="data/testdata.csv",

    data_type="csv",

    vectoriser=first_onehot_vectoriser,  # or switch to the GcpVectoriser if you have it :)

    batch_size=10,

    overwrite=True,

)

We’ve built a vector store! Now to search it with a query.

We first need to format our input data for the VectorStore’s search() method. We want our data in a VectorStoreSearchInput data object:

The different methods of our VectorStore expect these dataclass objects so that the data being passed to the VectorStore can be validated.

from classifai.indexers.dataclasses import VectorStoreSearchInput

input_data = VectorStoreSearchInput({"id": [1], "query": ["places with a vast delta"]})

input_data

onehot_search_results = my_vector_store.search(input_data)

onehot_search_results

That’s it!

That’s everything there is to it. By implementing a transform method that

- Accepts a string or a list of strings,

- Returns a numpy array

we can create our own custom vectoriser, such as the one-hot encoding model shown here. Check out the other DEMO notebooks to see how to use the VectorStore and Vectorisers in other ways, and how to deploy your search system over a REST API service :)